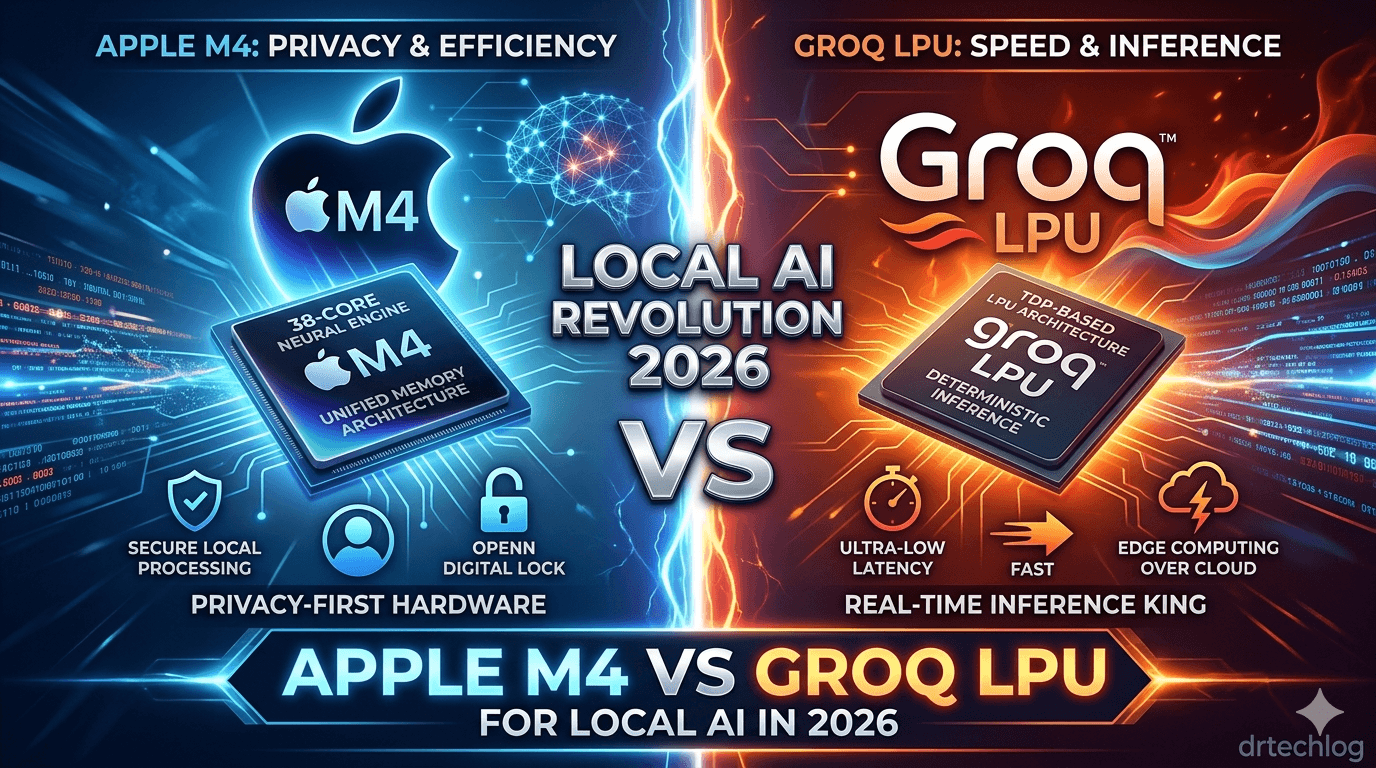

The tech world is at a breaking point. While upcoming operating systems like Windows 12 and Android 17 promise “AI everywhere,” users are facing a silent crisis: latency and privacy. Sending every minor AI task to massive cloud servers adds delay, exposes personal data, and is no longer sustainable. This is where the “Hardware Wars” of 2026 begin. In this guide, we break down why the Groq LPU and Apple’s M4 chip are not just incremental upgrades, but essential tools for the shift toward faster, more private AI.

The Latency Problem: Why Cloud AI is Failing You

Most users currently experience AI through a frustrating delay. Whether it’s waiting for a ChatGPT response or for a Gemini agent to process a command, the round trip to the server is the bottleneck. For developers, this latency is more than just an annoyance. If an app's main thread ends up blocked while it waits on a slow response, the interface freezes, and on Android a main thread that stays blocked for too long triggers an ANR (Application Not Responding) error, a problem we’ve covered extensively for those looking to optimize their apps.
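One common way to keep an app responsive is to put a deadline on the remote call and fall back when it is missed. The sketch below illustrates the idea in Python with `asyncio`. The function names, the simulated round trip, and the fallback message are all hypothetical stand-ins, not a real cloud API.

```python
import asyncio


async def fake_cloud_inference(prompt: str, round_trip_s: float) -> str:
    """Hypothetical stand-in for a remote AI call; the sleep simulates the round trip."""
    await asyncio.sleep(round_trip_s)
    return f"cloud answer to: {prompt}"


async def answer_with_deadline(prompt: str, round_trip_s: float, deadline_s: float) -> str:
    """Wait for the remote answer, but give up once the deadline has passed."""
    try:
        return await asyncio.wait_for(
            fake_cloud_inference(prompt, round_trip_s), timeout=deadline_s
        )
    except asyncio.TimeoutError:
        # Assumption: the app has some fallback, such as a local model or a retry prompt.
        return "timed out: use an on-device fallback"


if __name__ == "__main__":
    # A fast server answers in time; a slow one triggers the fallback.
    print(asyncio.run(answer_with_deadline("summarize this", 0.01, 0.5)))
    print(asyncio.run(answer_with_deadline("summarize this", 0.2, 0.05)))
```

Because the wait is awaited rather than blocking, the event loop stays free to handle other work while the request is in flight. The same principle applies to keeping network waits off an Android app's main thread.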

1. Groq LPU: The King of Real-Time Inference

While Nvidia dominates the “training” of AI models, Groq’s Language Processing Unit (LPU) is winning the “inference” game (the speed at which AI actually answers you).

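- The Architectural Shift: LLMs generate text one token at a time, and each token depends on the ones before it. Traditional GPUs, which are built for large parallel batches, are a poor fit for that sequential pattern. Groq’s architecture is deterministic: execution is scheduled ahead of time, so timing is predictable and text generation feels near-instant.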

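- Real-World Value: Imagine a coding assistant that streams hundreds of tokens per second, so that a long suggestion appears almost as soon as you ask for it. Keep in mind that Groq’s LPUs are data-center hardware, reached through its cloud service or on-premises racks rather than built into a laptop, so this speed still depends on a connection to wherever the LPU runs. As we noted in our analysis of top technology trends for 2026, speed is the new currency in the AI era.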
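To make the value of inference speed concrete, here is a rough back-of-the-envelope sketch. It models the time to receive a full answer as the network round trip, plus the time to the first token, plus the output length divided by throughput. The function name and all the numbers are illustrative assumptions, not measured figures for any product.

```python
def response_time_s(
    output_tokens: int,
    tokens_per_second: float,
    time_to_first_token_s: float = 0.0,
    network_round_trip_s: float = 0.0,
) -> float:
    """Estimate seconds until a full response has arrived (simplified model)."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    generation_s = output_tokens / tokens_per_second
    return network_round_trip_s + time_to_first_token_s + generation_s


if __name__ == "__main__":
    # Hypothetical throughputs for a 400-token answer.
    for label, tps in [("slower backend", 40.0), ("faster backend", 400.0)]:
        seconds = response_time_s(400, tps, time_to_first_token_s=0.25)
        print(f"{label}: {seconds:.2f} s")
```

In this simplified model, a tenfold increase in throughput cuts generation time tenfold, while the fixed costs (round trip and time to first token) stay the same. That is why very fast inference hardware makes the network hop the next thing to optimize.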

2. Apple M4: Bringing Neural Power to the Masses

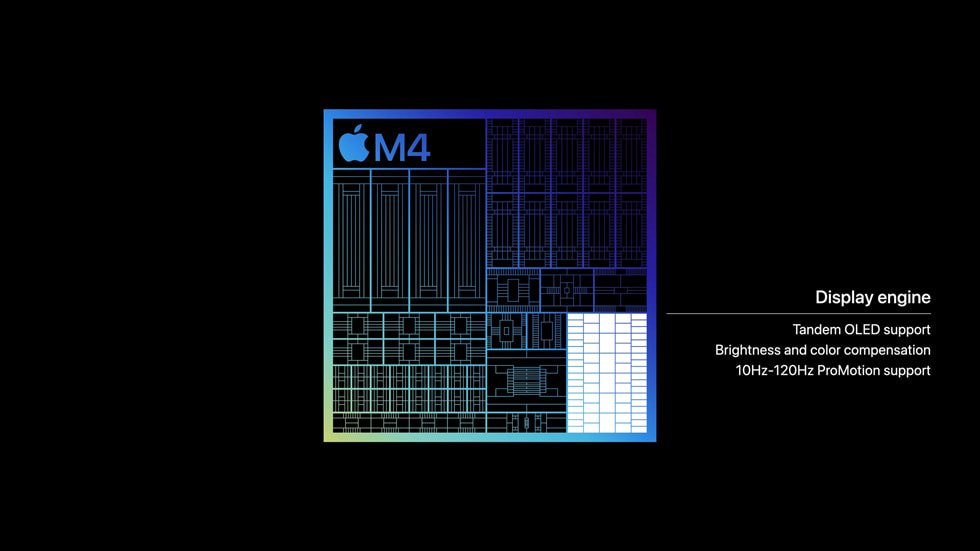

Apple’s M4 chip, the heart of the latest iPad Pro and MacBooks, isn’t just about benchmarks; it’s about the Unified Memory Architecture (UMA).

- The Edge: By integrating the CPU, GPU, and a 16-core Neural Engine (rated by Apple at up to 38 trillion operations per second) on a single chip with shared memory, the M4 can run smaller AI models like Llama 3 8B locally with very little lag.

- The Privacy Solution: This is a game-changer for professionals. Lawyers, doctors, and researchers can now process sensitive data without uploading it to a third-party server. This hardware shift aligns well with the privacy-first features expected at Google I/O 2026 and in Android 17.
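Whether a model like Llama 3 can run locally depends largely on whether its weights fit in the device's unified memory. The sketch below estimates that footprint from parameter count and precision. The 16 GiB device size and the 30% headroom reserved for the operating system, apps, and the model's working memory are assumptions chosen for illustration.

```python
def weight_footprint_gib(n_params: int, bits_per_weight: int) -> float:
    """Approximate memory needed for the model weights alone, in GiB."""
    return n_params * bits_per_weight / 8 / 2**30


def fits_in_memory(
    n_params: int,
    bits_per_weight: int,
    memory_gib: float,
    headroom_fraction: float = 0.3,  # assumed share kept free for everything else
) -> bool:
    """Check whether the weights fit in the memory left after reserving headroom."""
    if not 0 <= headroom_fraction < 1:
        raise ValueError("headroom_fraction must be in [0, 1)")
    usable_gib = memory_gib * (1 - headroom_fraction)
    return weight_footprint_gib(n_params, bits_per_weight) <= usable_gib


if __name__ == "__main__":
    params = 8_000_000_000  # roughly the size of an 8-billion-parameter model
    for bits in (16, 4):
        size = weight_footprint_gib(params, bits)
        ok = fits_in_memory(params, bits, memory_gib=16)
        print(f"{bits}-bit weights: {size:.1f} GiB, fits in 16 GiB: {ok}")
```

Under these assumptions, 16-bit weights for an 8-billion-parameter model do not fit comfortably on a 16 GiB machine, while a 4-bit quantized version does. This is a floor rather than a full estimate, since it ignores the extra memory a model needs while generating text.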

Comparison: Groq LPU vs. Apple M4

| Feature | Groq LPU | Apple M4 (Neural Engine) |
| --- | --- | --- |
| Primary Use | Enterprise Speed / LLM Inference | Consumer Privacy / Content Creation |
| Latency | Ultra-Low (Milliseconds) | Low (Real-time local) |
| Accessibility | Workstation / Cloud Integration | Built-in to Mac/iPad |
| Best For | Developers & Heavy LLM Users | Creative Pros & Privacy Enthusiasts |

Practical Takeaway: How to Choose Your Next Device?

If your workflow depends on generative AI speed and high-volume data processing, look for cloud services or workstations backed by LPUs. However, if your priority is data privacy, portability, and mobile efficiency, the Apple M4 ecosystem is currently hard to beat for the average professional.

Conclusion

The future of tech isn’t in a distant data center; it’s in your pocket and on your desk. By shifting to local AI processing, we aren’t just gaining speed—we are regaining control over our digital lives. As we move further into 2026, the gap between “Cloud-First” and “Local-First” will only widen.

Sources

- Apple Newsroom (M4 Chip Announcement): https://www.apple.com/newsroom/

- Groq Official (LPU Technology): https://groq.com/lpu/

- The Verge (Future of On-Device AI): https://www.theverge.com/ai-artificial-intelligence

- Ars Technica (Hardware Deep Dives): https://arstechnica.com/gadgets/
